Computational Psychiatry Needs Systems Neuroscience

This piece was originally published in The Transmitter. I am adapting it here with some additional context for readers of this newsletter.

Illustration by Harol Bustos

“Dr. Halassa, you do not understand, but it is a new system that Elon Musk invented. Starlink… This is what they implanted in me, and I am getting zapped. You need to treat the zapping, not give me these ridiculous medications.”

As a practicing psychiatrist, I must have heard a version of this narrative hundreds of times. Delusions are one of the most common symptoms of schizophrenia, along with hallucinations, disorganized thinking, social withdrawal and flattened affect. Psychiatry has medications that sometimes control hallucinations, but delusions and other symptoms often persist despite treatment.

The idea of a delusion is quite profound; it touches on the very notion of what it is to believe something and how to adjust the belief when the evidence suddenly reverses. As a systems neuroscientist, when I hear these stories, I cannot help but wonder how they emerge. What type of brain process can possibly make someone believe something with absolute certainty and make it impossible for them to even consider alternative interpretations?

A framework I find useful here, and one I develop more extensively in a separate project I’m working on, is the coalition framework: the brain as a collection of distinct systems running in parallel, each representing the world differently, and each with its own strategy and timescale (for an early post about this, please see here). For many problems we face, multiple systems are active simultaneously, and the coherence of behavioral output (as well as appraisals of it) depends on mechanisms that arbitrate among them. Cognition, therefore, depends on coordination across these systems. The exact form of these systems is a work in progress, but it is clear, for example, that the learning algorithms of the cortex, basal ganglia and cerebellum are distinct. The idea is that combinations of these microcircuit elements give rise to distinct macrocircuits with different algorithmic features, each adapted to different problems. We have recently discovered that associative thalamic circuits may arbitrate between frontal areas adapted to learning associations from scratch and those that adapt based on previously learned environmental structure (Wang et al., 2025).
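To make “arbitration” concrete, here is a minimal sketch of one textbook arbitration rule: each subsystem reports an estimate together with its own uncertainty, and the arbiter weights each report by its precision (1/variance). This generic reliability-weighted rule is chosen purely for illustration; it is not the thalamic mechanism reported in Wang et al. (2025). The class `SubsystemReport`, the function `arbitrate` and the subsystem names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class SubsystemReport:
    """What one (hypothetical) subsystem contributes: its estimate and its own uncertainty."""
    name: str
    estimate: float
    variance: float


def arbitrate(reports):
    """Precision-weighted arbitration: each subsystem's weight is proportional to 1/variance.

    Returns the combined estimate and the weight assigned to each subsystem.
    """
    precisions = [1.0 / r.variance for r in reports]
    total = sum(precisions)
    weights = {r.name: p / total for r, p in zip(reports, precisions)}
    combined = sum(weights[r.name] * r.estimate for r in reports)
    return combined, weights


# Two hypothetical subsystems disagree; the more certain one dominates
# without completely silencing the other.
reports = [
    SubsystemReport("structure_based", estimate=0.8, variance=0.04),
    SubsystemReport("from_scratch", estimate=0.2, variance=0.16),
]
combined, weights = arbitrate(reports)
print(round(combined, 3), {k: round(w, 3) for k, w in weights.items()})
```

Soft weighting is only one design choice; a winner-take-all arbiter that simply picks the least uncertain subsystem is another common option, and the two behave very differently when one subsystem becomes overconfident.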

This coalition framing has implications beyond psychiatry. If you take seriously the idea that we are all combinations of subsystems, it may become less puzzling why we behave so differently in different social settings. One can think of social forces as external inputs that may synchronize particular subsystems across people, giving rise to groupthink phenomena from components that other models of function wouldn’t expect to behave this way. But I digress; this post is not about that topic. Instead, it is about the loss of coordination within individuals and its relationship to mental health conditions.

Indeed, seeing someone in a psychotic state is among the most compelling evidence for this framework. The patient I described at the outset was not confused about what he believed to be true. He was quite certain. Could this be related to changes in the arbitration mechanisms that normally allow competing interpretations to be weighed appropriately? Did the output of one system become dominant in a way that made it impervious to change? Understanding how that happens requires understanding what the coordination mechanisms are and where they live in the brain. That is where we turn to computational psychiatry and systems neuroscience for insight.
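One way to formalize a belief that is “impervious to change” is a Bayesian belief whose prior precision is pathologically high. The sketch below is a toy built on assumptions, not a claim about what happens in psychosis: it uses a standard conjugate-normal update, encodes a belief as a number purely for illustration (1.0 = fully convinced, 0.0 = what the evidence indicates), and compares a flexible prior with a rigid one after ten pieces of contrary evidence. The function names are hypothetical.

```python
def update_gaussian_belief(mean, var, observation, obs_var):
    """One conjugate-normal Bayesian update of a Gaussian belief after one Gaussian observation."""
    posterior_precision = 1.0 / var + 1.0 / obs_var
    posterior_var = 1.0 / posterior_precision
    posterior_mean = posterior_var * (mean / var + observation / obs_var)
    return posterior_mean, posterior_var


def belief_after_contrary_evidence(prior_var, n_observations=10, obs_var=1.0):
    """Start convinced (mean 1.0), then observe evidence pointing to 0.0 n_observations times."""
    mean, var = 1.0, prior_var
    for _ in range(n_observations):
        mean, var = update_gaussian_belief(mean, var, 0.0, obs_var)
    return mean


flexible = belief_after_contrary_evidence(prior_var=1.0)  # ordinary prior uncertainty
rigid = belief_after_contrary_evidence(prior_var=0.001)   # extremely precise prior
print(round(flexible, 3), round(rigid, 3))
```

With the rigid prior, ten contradicting observations barely move the belief, whereas the same evidence moves the flexible belief most of the way. An overweighted prior is only one candidate account; a failure of arbitration between systems could produce similar rigidity.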

Computational psychiatry is a field that takes seriously the idea that mental symptoms reflect specific failures in the computations the brain performs. The field relies on tasks that isolate specific processes, such as how uncertainty is estimated, how evidence is weighted over time, or how competing memories are resolved. When someone with schizophrenia (or another psychiatric condition) performs these tasks, the pattern of errors can reveal something that clinical observation alone cannot. For example, in reasoning tasks, we can ask whether people use different strategies or take distinct steps in evaluating options, which may allow us to infer why they are more likely to exhibit delusional thinking. Readers of this newsletter will recognize this level of description as algorithmic: the idea that a mental process can be captured by a set of operations that transform task-relevant inputs into latent variables that drive behavioral outputs. Two patients carrying the same diagnosis may have distinct algorithmic failures that natural language, the substrate of clinical communication, would describe identically.
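The algorithmic level can be illustrated with a toy two-choice model in which an input is transformed into a latent decision variable that is then mapped to a choice. Two hypothetical failures are compared: extra noise in the input-to-latent step, and random lapses in the latent-to-output step. The model, the function names and all parameter values are illustrative assumptions and are not fitted to any data.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def p_correct(signal, noise_sd=1.0, lapse=0.0):
    """P(correct) on a two-choice trial in a toy input -> latent -> output model.

    Input -> latent: the signal is encoded with Gaussian noise of size noise_sd.
    Latent -> output: the agent picks the side the latent variable favors,
    except on a fraction `lapse` of trials, where it guesses at random.
    """
    p_latent_correct = normal_cdf(signal / noise_sd)
    return (1.0 - lapse) * p_latent_correct + lapse * 0.5


signals = [0.5, 1.0, 2.0, 3.0]  # from hard to easy trials
for s in signals:
    print(
        f"signal={s}: "
        f"baseline={p_correct(s):.3f} "
        f"noisy_encoding={p_correct(s, noise_sd=2.0):.3f} "
        f"lapsing={p_correct(s, lapse=0.2):.3f}"
    )
```

Both hypothetical agents are impaired relative to baseline, but the noisy-encoding agent is worse on hard trials while the lapsing agent is worse on easy ones. A single summary label such as “poor performance” would describe them identically.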

But to target algorithmic-level changes, one needs knowledge of the circuits that implement them. That is where systems neuroscience comes into play: the study of neural circuits and systems that give rise to specific operations. It has given us the notion of receptive fields, population dynamics and task-relevant manifolds, grounding abstract computational ideas in measurable neural activity. In essence, computational psychiatry needs systems neuroscience to make a difference in the real world.

Let’s make this very concrete: consider a decision-making task in which a cue guides a person’s attention to one of two targets, and then he or she makes a choice based on specific features of the attended target. When the cue is unambiguous, what to pay attention to is straightforward, and therefore decision-making is easy. But making the cue ambiguous renders attention and subsequent choice harder, a procedure that can be precisely titrated to quantitatively study how the brain handles uncertainty in decision-making. Instead of observing unstructured behavior and trying to figure out what went wrong, we can precisely isolate one specific operation, how beliefs get updated when evidence is uncertain, and measure how that process differs in someone with schizophrenia.
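A minimal sketch of how cue ambiguity can be titrated and how it propagates to choice accuracy, under simplifying assumptions: the cue is a mixture whose fraction pointing to the correct target (`cue_mix`) runs from 1.0 (unambiguous) to 0.5 (fully ambiguous); the observer perceives that fraction with Gaussian noise and attends to the target its percept favors; and if it attends to the wrong target, it guesses. The names, the noise model and the parameter values are hypothetical and do not describe the actual task.

```python
import math


def normal_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))


def p_attend_correct(cue_mix, perceptual_sd=0.1):
    """Probability of attending to the correct target.

    cue_mix is the (hypothetical) fraction of the cue signal that points to the
    correct target: 1.0 is unambiguous, 0.5 is fully ambiguous. The observer
    perceives cue_mix with Gaussian noise and attends to whichever target the
    percept favors (percept > 0.5).
    """
    return normal_cdf((cue_mix - 0.5) / perceptual_sd)


def p_correct_choice(cue_mix, p_correct_if_attended=0.9, perceptual_sd=0.1):
    """Choice accuracy: discriminate well if attending the right target, guess otherwise."""
    p_att = p_attend_correct(cue_mix, perceptual_sd)
    return p_att * p_correct_if_attended + (1.0 - p_att) * 0.5


for mix in [1.0, 0.8, 0.6, 0.55, 0.5]:  # from clear to fully ambiguous
    print(f"cue_mix={mix:.2f}  accuracy={p_correct_choice(mix):.3f}")
```

Accuracy falls smoothly as `cue_mix` approaches 0.5, so a single mixing fraction acts as a knob for setting difficulty, while the second stage (discriminating the attended target) is held constant.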

Real power emerges when we use the same task structure across species, testing humans, other primates and mice on versions of the same cognitive challenge. Although details may differ, the core computational problem remains constant, enabling us to observe similar neural operations across species and to see how they implement different steps of the same algorithm. Crucially, we can do experiments in animals that would be impossible to conduct in humans, such as recording from specific cell types, manipulating their activity and decoding what information they carry. Because the animal is doing something constrained and measurable, we can link what is seen in the brain directly with what the animal computes.

Psychiatric neuroscience was developed outside of this tradition; researchers have tried to use animal models to capture the full mess of real-world symptoms: forced swim tests supposedly model despair, and elevated plus mazes supposedly model anxiety. But these tasks are too unconstrained, making it impossible to decode what specific computation has gone wrong, because the behavior reflects too many things at once. Some human neuroimaging has the opposite problem. For example, resting-state connectivity can show which brain regions are active together, but it cannot really tell what they are computing or communicating. Both approaches skip over the algorithmic level where the dysfunction driving symptoms lives.

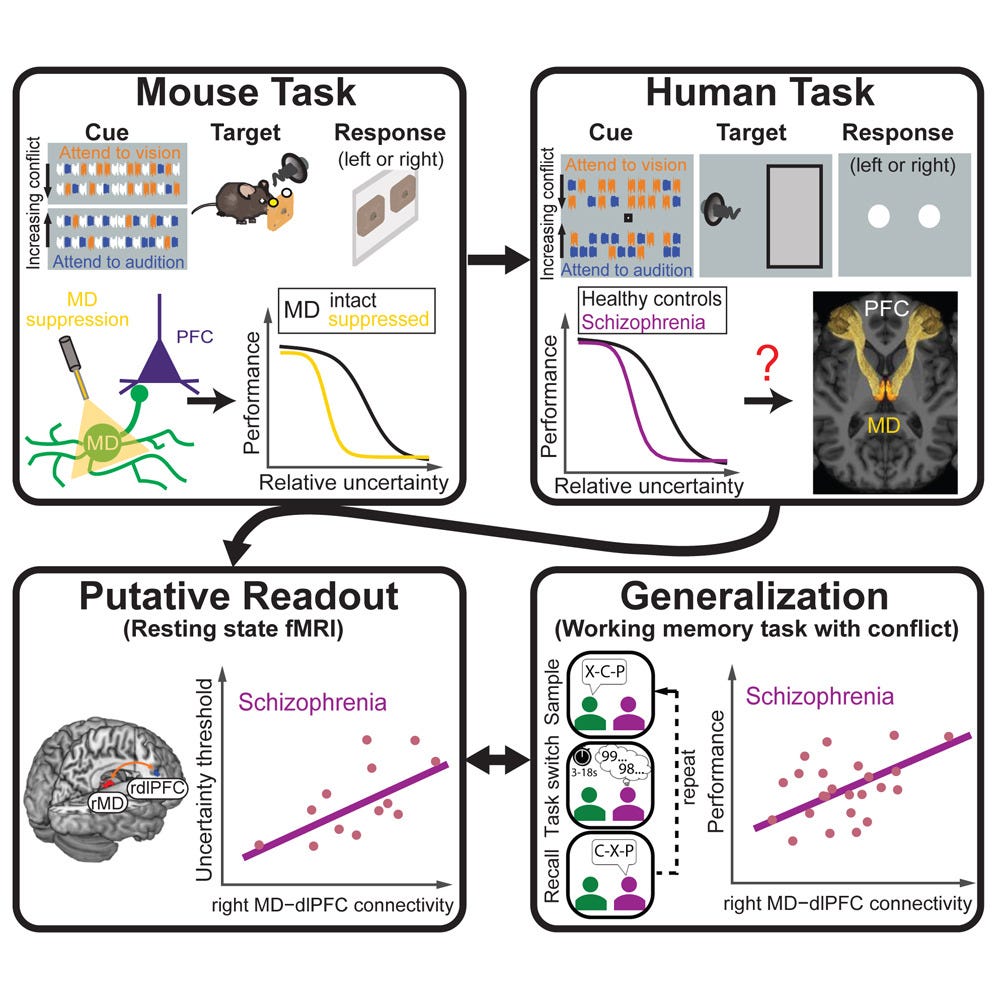

Serendipitously, and using systems neuroscience approaches, my lab stumbled upon a domain in which well-controlled behavioral tasks in animals shed light on a brain-behavior relationship in schizophrenia. Over the past ten years, we have studied how cognitive control is implemented in neural circuits, initially in mice and then in tree shrews. In these tasks, cues that vary from trial to trial guide attention to targets. Research in mice identified how neurons in the prefrontal cortex turn cues into attentional bias signals, which in turn influence processing in sensory circuits.

We discovered that the thalamus is critical for this prefrontal operation. The thalamus is traditionally thought to relay information from the periphery, but our experiments showed that the mediodorsal thalamus is turned inwards, extracting various signals from the vast data within the prefrontal cortex itself. This allows it to identify the right context and help the prefrontal cortex adapt when the context switches. It also identifies the degree of uncertainty in the cues it receives, and we found that it slows prefrontal decision-processing in a manner commensurate with these estimates. In essence, the thalamus provides a natural mechanism for filtering noise in decision-making.
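One way to formalize “slowing prefrontal decision processing in proportion to estimated uncertainty” is a drift-diffusion model whose decision bound rises with cue uncertainty. The sketch uses the standard closed-form accuracy and mean decision time of an unbiased diffusion with bounds at ±a, and compares a hypothetical agent that raises its bound with uncertainty against one with a fixed bound. Modeling the thalamic contribution as a bound adjustment, the function names and the parameter values are all assumptions for illustration, not the lab’s model.

```python
import math


def ddm_accuracy(drift, bound, noise=1.0):
    """P(correct) for an unbiased drift-diffusion process with bounds at +/-bound."""
    return 1.0 / (1.0 + math.exp(-2.0 * drift * bound / noise ** 2))


def ddm_mean_decision_time(drift, bound, noise=1.0):
    """Mean decision time (excluding non-decision time) for the same process; needs drift > 0."""
    return (bound / drift) * math.tanh(drift * bound / noise ** 2)


def agent(cue_uncertainty, adaptive, base_drift=2.0, base_bound=0.5, slowing_gain=2.0):
    """Return (accuracy, mean decision time) for a hypothetical agent.

    cue_uncertainty in [0, 1) weakens the evidence (drift). An adaptive agent raises
    its bound in proportion to the uncertainty estimate; a fixed agent does not.
    """
    drift = base_drift * (1.0 - cue_uncertainty)
    bound = base_bound * (1.0 + slowing_gain * cue_uncertainty) if adaptive else base_bound
    return ddm_accuracy(drift, bound), ddm_mean_decision_time(drift, bound)


for u in [0.0, 0.3, 0.6, 0.9]:
    acc_a, dt_a = agent(u, adaptive=True)
    acc_f, dt_f = agent(u, adaptive=False)
    print(f"uncertainty={u:.1f}  adaptive: acc={acc_a:.3f}, dt={dt_a:.2f}  "
          f"fixed: acc={acc_f:.3f}, dt={dt_f:.2f}")
```

The two agents are identical when cues are clear. As uncertainty grows, raising the bound trades speed for accuracy, effectively averaging out more noise, while the fixed-bound agent stays fast but loses accuracy disproportionately.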

When we developed this approach, we were not studying schizophrenia, but others had shown that people with schizophrenia exhibit altered resting-state functional connectivity between the thalamus and prefrontal cortex. These changes had no behavioral correlates, however. Once we understood what computation the mediodorsal thalamus performs, we could ask whether that same computation might be impaired in people with schizophrenia. Remarkably, we found that those with the disorder are worse at handling ambiguous cues: they perform normally when cues are clear but fail disproportionately as uncertainty increases. This behavioral pattern mirrors what we see when we optogenetically inhibit the mediodorsal thalamus in mice performing the same task. The deficit also correlates with thalamocortical resting-state functional connectivity in people with schizophrenia.

Graphical abstract of Huang, Wimmer et al., 2024

So we have convergence: the same behavioral deficit, analogous circuits and similar computational architecture across species. That gives us evidence that we have identified a specific computational alteration in schizophrenia, which opens the door to interventions that target the underlying circuits. Instead of broad pharmacological targeting, maybe we could target the circuits that implement noise filtering and contextual inference.

But that is just one piece. Delusions likely involve multiple computational alterations. How does evidence update beliefs? How is uncertainty represented and used? How does the brain assign credit in complex environments? These might be different changes in different people.

What systems neuroscience can help us do now is build tasks that dissect these operations separately. We have developed tasks in which we ask participants to arbitrate between different forms of uncertainty, including uncertainty about sensory inputs and uncertainty about the structure of the environment or its rules. This allows us to study how people and animals manage uncertainty, identify context and assign credit under near-naturalistic conditions.
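Arbitrating between sensory and structural uncertainty can be sketched with a two-context hidden Markov filter: `cue_reliability` captures sensory noise (how often an outcome faithfully reflects the active context), and `hazard` captures structural volatility (how often the context switches). This generic textbook filter is chosen for illustration only and is not the lab’s task model; all names and parameter values are hypothetical.

```python
def update_context_belief(belief_a, evidence_for_a, cue_reliability, hazard):
    """One step of a two-context hidden Markov filter.

    belief_a: current P(context A).
    evidence_for_a: True if this trial's outcome looked consistent with context A.
    cue_reliability: P(outcome looks consistent with the true context); below 1 means sensory noise.
    hazard: per-trial probability that the context switches (structural volatility).
    """
    # Predict: the context may have switched since the last trial.
    prior_a = belief_a * (1.0 - hazard) + (1.0 - belief_a) * hazard
    # Update: weigh the new outcome by how reliable observations are.
    like_a = cue_reliability if evidence_for_a else 1.0 - cue_reliability
    like_b = 1.0 - cue_reliability if evidence_for_a else cue_reliability
    return prior_a * like_a / (prior_a * like_a + (1.0 - prior_a) * like_b)


def trials_to_switch(cue_reliability, hazard=0.05, n_before=10, n_after=10):
    """Trials of context-B evidence needed (after n_before trials of A evidence) to favor B."""
    belief = 0.5
    for _ in range(n_before):
        belief = update_context_belief(belief, True, cue_reliability, hazard)
    for t in range(1, n_after + 1):
        belief = update_context_belief(belief, False, cue_reliability, hazard)
        if belief < 0.5:
            return t
    return None  # never switched within n_after trials


print(trials_to_switch(0.9), trials_to_switch(0.6))
```

With reliable observations, the filter attributes contrary outcomes to a change in context within a few trials; with noisy observations, it needs more evidence before concluding the rules have changed, because each surprising outcome might just be noise. Which explanation a learner favors is exactly the kind of arbitration such tasks are designed to probe.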

Early data from this effort have confirmed our original findings from the simpler task: people with schizophrenia have difficulty managing uncertainty. However, these data have also provided additional insight into the different types of algorithmic failures that people may experience. The deeper message from this line of work is that the question “why do patients with schizophrenia exhibit delusional thinking?” may not have a single answer, and that this may be highly significant. Different people appear to carry different algorithmic failures that converge on similar clinical presentations. Identifying these fingerprints and mapping them onto neural circuitry is our challenge. Still, we are optimistic that we (and the field more generally) are on the right track to identify these mechanisms, which will hopefully usher in a new era of precision psychiatry.

Superstar MD/PhD student Sahil Suresh presenting at the Schizophrenia International Research Society Meeting, 2026 in Florence. Early evidence for patient stratification based on an animal-inspired hierarchical decision making task.


This is a most fascinating exploration. I have had patients with delusions that were as obvious as your opening example, e.g., one who devoted an astonishing amount of time to cutting a medication tablet into sixteen intricate, specific slices after reading that "divided doses" through the course of a day were "superior" to simply taking the pill as directed. Or the naval officer who literally drove cross-country in adult diapers, stopping only for fuel, to a base on the West Coast, believing she had been followed the entire trip by Navy investigators because she was a "whistleblower"; she would only speak to the base Commandant (and was arrested). Or the patient who reported seeing the same woman as a clerk at a San Francisco grocery store, a bus driver, a ticket agent at the Moscone Center, a cop in Union Square, and a nurse in the ER, all on the same afternoon.

But the unforgettable patients are the ones whose delusion appeared suddenly in the course of discussion, out of the blue, totally unexpected and incongruous with anything previously discussed. I was evaluating a Black man in his late 50s in state prison who was pleasant, impeccably dressed (his clothes were literally pressed and starched, and he told me he had a small "business" laundering and pressing clothing for other inmates to earn money), well spoken, friendly, attentive, and motivated for parole. He had been convicted of manslaughter in a fight where both men were intoxicated, which he described as self-defense. He had served 8 years of his 11-year sentence with no infractions and was a model prisoner. In the standard questions, I asked how often he believed others could hear or control his thoughts, and he said, "Well, never until they put that new stainless steel mirror in my cell." When I asked him to explain, he said, "The installer showed me the back and asked me if it didn't look like a computer motherboard. And I could immediately feel they were reading and hearing my thoughts." He continued that their ability to hear his thoughts extended even beyond his physical presence in his cell. When I tried to focus him, as you say, on how "making the cue ambiguous renders attention and subsequent choice harder, a procedure that can be precisely titrated to quantitatively study how the brain handles uncertainty in decision-making," he was unable to explain how this happened but simply accepted it. Noting the hypersensitivity that most inmates have about telling anything to authorities (I frequently asked parolees in rehab how much they valued telling the truth while in prison, and the answer was always "ZERO!"), I asked him how it was possible to live in a state prison knowing that the corrections officers and others in authority could read all his thoughts.
He considered it for a period of time before ironically stating, "I just don't think about it."

To truly understand this "fixed delusion in the presence of evidence to the contrary" would be a gift. I hope you, and others, continue to pursue this in depth. As always, a much appreciated read!

Nice text! As a psychiatrist and molecular neurobiologist, I wonder: could any of these algorithms be studied in cell models?